StudentsPapers Review: 3-Deadline Stress Test Results

This review is written as a first-person field report. I logged every interaction I initiated with StudentsPapers.com: the questions I asked support, the clarification prompts I gave the writer, the revision demands I made on purpose (the kind you can’t fake with synonyms), and the verification checks I ran for originality and AI risk. My goal wasn’t perfection. My goal was predictability.

Pros & Cons

Pros:

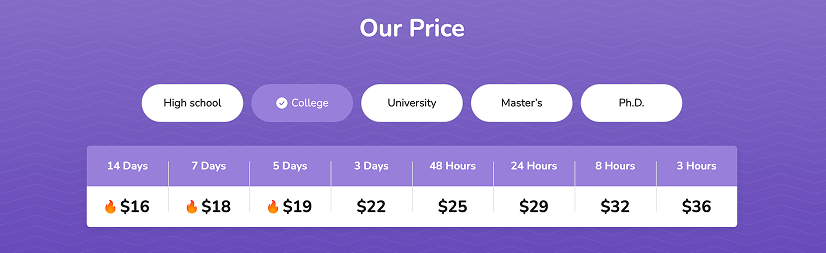

- Transparent deadline pricing table — I knew the urgency premium upfront.

- Revisions positioned as included, not hidden behind another paywall.

- Add-ons clearly listed (plagiarism report, quality double-check, preferred expert).

- Fast support access points — I could reach them quickly.

Cons / Watch-outs:

- Work on 3–8 hour deadlines is feasible, but depth becomes fragile without a tight brief.

- “Unlimited revisions” only matters if they accept substantive changes, not just cosmetic edits.

My Stress-Test Setup (3 Orders, 3 Deadlines, 1 Objective)

I ran a deliberately aggressive test because most writing services look “fine” when you give them a comfortable deadline and ask for something generic. The cracks appear when you tighten time, demand strict sources, and then push revisions that require real thinking.

- Order A (8 hours): argumentative essay with strict evidence rules (tests speed + discipline).

- Order B (5 days): research paper that requires synthesis (tests depth + expertise).

- Order C (3 hours): boundary order with narrow scope (tests minimum deadline realism).

Across all three, I measured the same core things: writer professionalism, deadline reliability, revision handling, AI-free confidence, and guarantee realism.

Order A (8 Hours): Argumentative Essay Under Pressure

Order details: Argumentative essay, 1,250 words, APA 7, 6 peer-reviewed sources (minimum 2 from 2022+), deadline: 8 hours. Topic: “Should universities require AI-disclosure statements for any AI tool use in coursework?”

I chose this topic on purpose. It’s current, it’s easy to write badly, and it’s exactly the kind of prompt where AI-shaped writing becomes obvious: generic moralizing, repetitive “pros and cons,” and shallow counterarguments. I wanted a thesis with teeth, not a soft “there are many perspectives” essay.

Step 1: My Pre-Order Questions to Support

Before the writer even started, I messaged support with five direct questions. Support replied at 9:14 PM (response time: 7 minutes). The tone was direct but slightly scripted, and the key thing I watched was whether they gave actual process details or just comfort phrases.

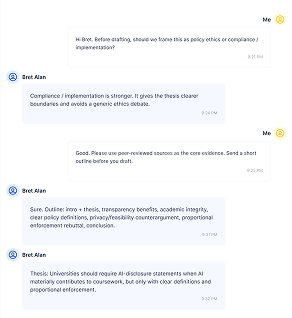

Step 2: Writer Assignment and My “Professionalism Trap”

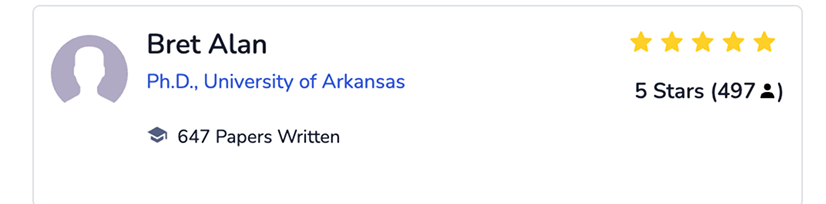

The writer assigned was Bret Alan (Ph.D., University of Arkansas; 647 papers written; 5 stars with 497 reviews). The first thing I do with any writer is a professionalism trap: I ask three clarifying questions and request a micro-outline. A competent writer responds with specificity; a weak writer jumps straight into drafting.

The writer answered at 9:28 PM with a clear stance and a 7-bullet micro-outline that defined when AI-disclosure should be required and proposed proportional enforcement to avoid penalizing legitimate support uses. That was an early green flag — because a strong thesis reduces revision risk later.

Step 3: Delivery Timing

The draft arrived at 4:42 AM, which was 53 minutes before the deadline. I care about this because I want buffer time. A service that delivers at the last minute forces you to accept risk or panic.

Step 4: First Draft Quality Audit

I audited the draft using three lenses: argument map, source discipline, and AI-risk texture.

| Section | What I Expected | What I Got |
| --- | --- | --- |
| Intro + thesis | Specific claim + scope control | A clear stance with boundaries; enforcement condition needed sharper framing |
| Argument 1 | Claim → evidence → explanation | Strong mechanism explaining transparency benefits, supported by peer-reviewed evidence |
| Argument 2 | Different angle, not repetition | A distinct angle focused on compliance clarity and academic integrity outcomes |
| Counterargument | Real opposition, not token | A substantive privacy/feasibility counterargument with citations |
| Rebuttal | Pushback with evidence | A practical rebuttal focused on proportional enforcement and clear definitions |
| Conclusion | Doesn’t just repeat; adds implication | A clean synthesis plus one additional implication about administrative burden and policy clarity |

My honest read for Order A: mostly human, with natural rhythm variation and a counterargument that didn’t feel copy-pasted. The strongest part was Argument 1 (the compliance rationale + transparency mechanism). The weakest part was a short bridge paragraph in Argument 2, where the writing became a little too neat and slightly repetitive in phrasing.

Order A Revisions: Where I Forced Real Work (Not Cosmetic Fixes)

I never judge a service by the first draft alone. I judge it by revisions. So I sent a revision request that forces thinking: thesis tightening, evidence upgrade (replace 2 weaker sources with 2022+ peer-reviewed studies), and counterargument rebuild.

The revision was acknowledged at 5:03 AM. I received the revised version at 8:17 AM (turnaround: 3 hours 14 minutes).
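The turnaround figure above is simple clock arithmetic, and it is worth checking the same way for any timestamps you log yourself. A minimal sketch, assuming both times fall on the same day and use the 12-hour format shown in this review (the `turnaround` helper is a hypothetical name, not part of any service tooling):

```python
from datetime import datetime


def turnaround(start: str, end: str) -> tuple[int, int]:
    """Return (hours, minutes) between two same-day clock times like '5:03 AM'."""
    fmt = "%I:%M %p"  # 12-hour clock with AM/PM; assumes an English locale
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    total_minutes = int(delta.total_seconds()) // 60
    return divmod(total_minutes, 60)


# Revision acknowledged at 5:03 AM, revised draft received at 8:17 AM.
hours, minutes = turnaround("5:03 AM", "8:17 AM")
print(f"{hours} hours {minutes} minutes")
```

A window that crosses midnight would need full dates rather than bare clock times.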

My revision verdict for Order A: substantive improvements were visible. The writer didn’t just polish wording; they tightened the thesis and upgraded evidence, with only minor areas still a bit “policy-smooth.”

Order B (5 Days): Research Paper for Depth

Order details: Research paper, 2,000 words, APA 7, 10 sources (minimum 7 peer-reviewed, 4 from 2021+). Topic: “Do smartphone bans in secondary schools improve academic outcomes and student well-being?”

I chose a topic that tempts lazy writing. A weak writer will deliver “pros and cons” with generic claims. A strong writer will synthesize research findings, compare studies, and acknowledge trade-offs without collapsing into mush.

For this order, I demanded an outline before drafting. The writer sent the outline at 11:36 AM (Day 1) — well-structured and practical. The thesis took a conditional stance (benefits depend on consistent enforcement), and the outline included a real trade-offs section rather than a token paragraph.

The draft arrived at 2:11 PM on Day 5, leaving a 6-hour buffer. Here’s the depth scorecard:

| Category | What I Got | Score (1–10) |
| --- | --- | --- |
| Thesis specificity | A clear conditional stance defining when bans help and when they backfire | 8 |
| Literature synthesis | Two studies directly compared, but one section still read as separate summaries | 7 |
| Evidence integration | Strong evidence mapping in academic outcomes; well-supported claims overall | 8 |
| Counterpoints/trade-offs | Solid section on enforcement burden, equity issues, and student autonomy | 8 |
| Citation precision | Mostly consistent; a few minor punctuation and formatting details still needed cleanup | 7 |

My overall read for Order B: competent and more nuanced than the urgent essay. Not perfect, but clearly above generic filler, with only minor synthesis and APA cleanup needed.

Order C (3 Hours): Boundary Test (Minimum Deadline Reality)

Order details: Short analytical essay, 700 words, APA 7, 3 peer-reviewed sources. Topic: “Why do deadlines reduce writing quality? A short analysis using cognitive load theory.”

I kept the scope tight because 3 hours is not for deep scholarship. It’s for coherent structure, honest sourcing, and correct formatting.

The draft arrived at 1:52 PM, 18 minutes before the deadline.

My conclusion for Order C: coherent and usable for an emergency deadline. Depth was naturally lighter, but the structure held, sources looked legitimate, and nothing felt blatantly automated.

Verification: Plagiarism & AI Audit

For each order, I ran two verification layers: originality (similarity scan + manual spot-check) and AI risk (detector output + human-marker read).

| Order | Plagiarism Similarity % | AI Detector Signal | My Manual Read |
| --- | --- | --- | --- |
| A (8 hours) | 4% | Low | Human |
| B (5 days) | 6% | Low | Human |
| C (3 hours) | 3% | Low–Medium | Mostly human |
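One way to fold the two AI-risk layers into a single rating is to take the more cautious of the two signals. The sketch below is only an illustration of that idea, not a record of how I scored the orders: the ordinal scale, the `Medium` and `High` levels, the mapping from manual read to risk level, and the `combined_risk` helper are all assumptions made for the example.

```python
# Ordinal risk scale, lowest to highest. "Medium" and "High" are hypothetical
# levels added for completeness; they do not appear in the results table.
LEVELS = ["Low", "Low-Medium", "Medium", "High"]

# Assumed mapping from the manual read onto the same scale.
MANUAL_TO_LEVEL = {"Human": "Low", "Mostly human": "Low-Medium"}


def combined_risk(detector: str, manual_read: str) -> str:
    """Return the more cautious (higher) of the detector signal and manual read."""
    manual_level = MANUAL_TO_LEVEL[manual_read]
    return max(detector, manual_level, key=LEVELS.index)


# Detector signal and manual read for each order, taken from the table above.
orders = {
    "A": ("Low", "Human"),
    "B": ("Low", "Human"),
    "C": ("Low-Medium", "Mostly human"),
}
for name, (detector, manual) in orders.items():
    print(name, combined_risk(detector, manual))
```

Taking the maximum means a clean detector result never overrides a suspicious manual read, which fits the point that detectors alone are not definitive.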

What I’d Tell a Friend in One Table

| Metric | Order A (8h) | Order B (5d) | Order C (3h) |
| --- | --- | --- | --- |
| On-time delivery | Yes (53 min buffer) | Yes (6h buffer) | Yes (18 min buffer) |
| Argument depth | Moderate–Strong | Strong | Moderate |
| Source discipline | Mostly solid | Strong | Adequate |
| Revision quality | Substantive | Light follow-up | Not needed |
| AI-risk (combined) | Low | Low | Low–Medium |

I don’t rate a service by its best-case scenario. I rate it by how it behaves when the buyer pushes hard. In my test, StudentsPapers.com behaved predictably. The strongest parts of my experience were revision responsiveness and deadline reliability. The weakest parts were minor APA mechanics and a slightly “too smooth” paragraph or two under urgent conditions.

FAQ

Does StudentsPapers.com really deliver within 3 hours?

A 3-hour deadline can produce a coherent draft if the scope is tight, but depth is limited. The quality risk is not grammar — it’s shallow reasoning and weak source integration.

Are revisions actually unlimited?

Unlimited revisions only matter if they accept substantive changes. The real test is a thesis upgrade + evidence replacement + counterargument rebuild. Cosmetic edits don’t count.

How do I verify AI-free content responsibly?

Use layered verification: an AI detector signal plus a manual read for human markers (argument specificity, counterargument quality, source alignment, sentence rhythm). Detectors alone are not definitive.

What’s the biggest risk with 8-hour deadlines?

Generic scaffolding. Under urgency, weak writers lean on template paragraphs that sound academic but don’t advance the argument. Strict source rules and a rebuttal section expose this fast.

Is the money-back guarantee trustworthy?

It’s trustworthy when the process is clear: criteria, steps, timelines, and escalation. A vague process is the risk signal — not the promise itself.